This is a follow-up to my previous post, AI Slop: A Perspective. Both of them are mostly me thinking aloud about the issues. I’m not an expert on AI (though I have a close friend who is, and who has helped me considerably with both technical advice and personal perspectives). I do know a decent amount about novels, having read and reviewed more than a thousand of them just since 2014, when I started reviewing everything I read, and I’ve published sixteen of my own and spent a good deal of time thinking about the craft of creating them.

This post is one novelist and reviewer’s take on the current state of AI as at February 2026, especially as it applies to the idea of using AI to write novels (or create art in general), and ends with a realistic suggestion of what you personally might do about it all. It’s a field which is constantly and rapidly changing, and even if I present insights that are true today, they may not continue to be true.

AI and Me

Let’s start with some background on my current use of AI, which is minimal, and why I don’t use it more.

I use AI at work sometimes (my day job is in technology), almost entirely to save time when I’m trying to figure out what mistake I’ve made in some code or an Excel formula. I’ve never used it to write, and don’t intend to, but I have used it for cover art once, on my most recently published novel. I made that decision because I knew I wasn’t going to sell many copies, and the guy who usually does my covers charges several hundred US dollars that I knew I wouldn’t get back in the near future, if at all. To be clear, his covers are great, and he absolutely charges a fair price for them, but I couldn’t justify spending that money in that specific instance.

I’ve never felt happy about that decision, and in future I plan to go back to what I’ve done previously for projects that I expect to not be profitable: using GIMP and royalty-free source images. I will probably replace that AI cover when I have time to do so, as well.

I’ve thought about using AI to do the part I don’t enjoy, which is the marketing, but I wouldn’t trust an AI agent with an advertising budget, and there are three reasons I don’t do marketing: because I don’t enjoy it, because I’m bad at it, and because I don’t like the way I now have to pay Amazon to get them to promote my books to people who would enjoy them. I’m stubborn that way, and since I have a day job and don’t have to make a living out of my writing, or indeed any money at all, I can afford to stand on principle and sell fewer books as a result. Other people are not so fortunate, and have to work with the market they have, not the market they wish they had.

So why am I not using AI? My concerns are these:

- Environmental impact. The nature of how AI works means it needs large datacentres that use a lot of power, and then a lot of cooling as the chips heat up, to stop them from melting themselves down; both of these things have an environmental impact. This, of all times, is not the moment to be adding to that! This by itself should mean we don’t support the widespread use of AI!

  AI datacentres, specifically, use a lot of power and water, for technical reasons to do with the amount of computing power being applied. I’m told that an AI query uses about three times the energy of a Google search query.

  Sure, maybe fusion power is coming (it has been for decades, though it’s seeming closer than ever to actually happening), but even if you’re generating effectively unlimited power cheaply and cleanly – and, as yet, we’re not – the waste heat still has to go somewhere. You also still need a lot of water to do the cooling, and clean water is a scarce resource that actual people need in order to live. The cooling process turns it into not-clean water, and often evaporates it entirely. Yes, you could power the datacentres with renewables, which have no net heat effect, since the generation takes heat out of the environment in order to turn it into power. Yes, you could heat homes with the waste heat; it’s being done in Scandinavia. Yes, you could recycle the water, though it makes the cooling more costly. There are mitigations that can be put in place. But they’re mostly not in place yet, largely because most AI datacentres are in the US, where regulations don’t require the mitigations and the power generation mix still includes a lot of fossil fuels. This alone makes AI use ethically dubious unless there’s a significant upside, one you can’t get any other way, to balance the downside. Sometimes there is, but I don’t think generating a cover for my book is one of those times.

- Wholesale piracy of intellectual property. It’s quite likely that Meta’s AI has been trained on pirated copies of several of my books, along with thousands of others that they could certainly have afforded to pay for. I’m not going to go into the argument that the collective creative output of humanity up until now is inherently the source for any new creativity, and therefore the AI trainers were engaging in “fair use” when they used copyright works; I don’t agree with it, but I’m not going to die on that hill. I do resent the outright piracy, though.

- I don’t trust big tech companies to behave ethically in any way unless it happens to coincide with what will get them the most profit, and I think their record bears me out here. Social media was honestly never great, and is now thoroughly awful thanks to profit-led decisions by the exact people who are developing AI, or people who are very like them. AI has the potential to be even more awful, and I don’t want to be locked into it when that happens.

  Anthropic does seem to be trying harder than the other companies not to be evil, but we saw how it went when Google announced that as a goal. And Anthropic has settled for over a billion dollars with some authors whose books it pirated.

- The development issue I mentioned in my earlier article – fewer people being willing to make things that AI could currently make better than them, in order to learn how to do it and eventually reach the point where they can make things that AI could never make. That’s a big problem for humanity, and it’s already affecting real people (junior developers, who are finding it hard to get work that would eventually turn them into senior developers, who in turn are, despite the hype of some AI moguls, still people we very much need).

The Work of Art

But maybe a lot of people would never get good enough to make things an AI couldn’t make? Most people have average ability or close to it, after all. The thing is, in writing as in anything else, you level up by grinding, so even if you start out average, if you want to be above average and have any potential to be so, you will have to work at it. I linked in my last post to this video by Brandon Sanderson about the exact issue I’m discussing, in which he talks about how, as a young man, he wrote a novel that sucked, knowing it was going to suck, in order to have the experience of writing a novel and learn something from the process. He learned enough that his next novel sucked less. By the time he wrote his sixth novel, it hardly sucked at all, to the point that he was able to get that one published, and he’s gone on getting better since. This is because he thinks about his craft as a craft, and works hard to do it as well as he’s capable of. He’s now very good, and has made a lot of money doing it – which is not an inevitable outcome, nor is it necessarily mainly because he works so hard at his craft, though I’m sure that plays a role.

There will always be people who enjoy making art for the sake of the process, for the enjoyment of the craft, and that’s not going away. We’re now in the third century of the industrial era, in an age when anything you can imagine can be made by a machine, and plenty of people still make, and buy, handcrafts.

Unfortunately, there’s not always a correlation between producing good art and enjoying the process; some good artists hate the process and find it incredibly painful, but go through it anyway because they’re driven to create or for some other reason, like it’s what they’re good at and can make a living doing. One of the fears about AI art is that some of them may not be able to make that living anymore, and then we lose them as artists. Is the gain of having all the AI artists instead worth it to us collectively? What about the impact it has on the humans who aren’t able to make a living making art anymore? What are they going to do instead? In some cases, something they struggle with less, but in other cases, something they struggle with more. And I think the latter will outweigh the former.

This is far from the first time technology has caused this sort of transition, of course, and the world is somehow still functioning. A century ago, the introduction of recorded music as soundtracks for silent films took work away from a lot of musicians, including my grandmother, who played the piano for the silent movie theatre. She probably didn’t get well paid for it, but with two small boys and her husband employed as an engine driver, any extra would have been welcome. Then there’s the transition from artists grinding their own pigments to buying them from colourmen. I’m sure someone railed against that, but not every process has to be done by hand. Still, that’s not to dismiss genuine concerns about the transition that AI will bring; it will cause, and has already caused, real harm to real people, whatever benefits it ends up having in the long term.

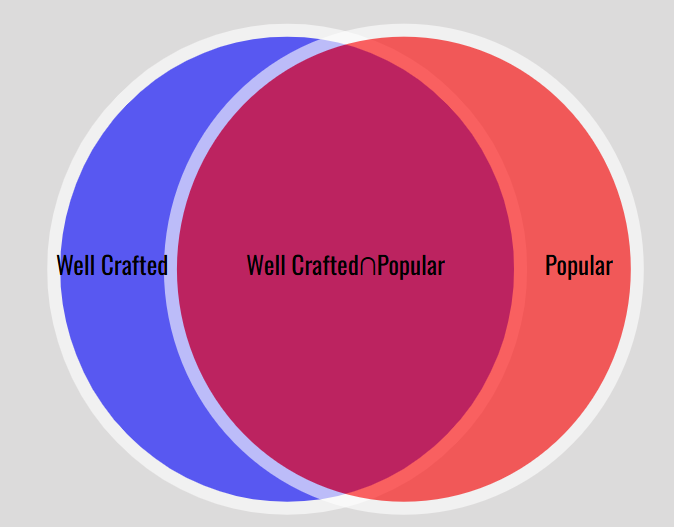

There’s a Venn diagram of art, in which the set of art that shows good craft and the set of art that appeals to a wide audience overlap, but are not by any means identical. Brandon Sanderson’s novels, and a good many other people’s, are in the overlap, but there are ones that gain a wide audience and don’t have good craft (such as Twilight), and ones that have good craft and don’t gain a wide audience, like a lot of the heavier Russian novels, Kafka, or Finnegans Wake. There are various reasons why good art doesn’t get an audience, whether it’s because it’s ahead of its time, difficult, uncomfortable, or just for reasons of discoverability, of which more later.

Let’s not, by the way, get into the gatekeeping discussion of what is and isn’t art, or at what point it’s so poorly crafted it isn’t art anymore. I’m calling it all art.

So there’s a parallel discussion to be had about commercialism in art. There are people who are only producing art because they make money at it. There are people who would, and perhaps do, pay to make art because they love the process and/or the product so much. But the art in the middle, produced both because the artist wants to spend time making art and because they can make some money out of it and so justify, or even just afford, spending that time not doing something else – that’s where the pinch comes if it becomes cheap and easy to make poorly crafted art, some of which is commercial and replaces the work of people who used to make art for a living.

And there will always be people who, for the sake of making money, will produce something that they don’t care about by a process they also don’t care about, even a process that harms someone else. If that’s easy and has any chance of being profitable (in social capital or actual money, and these days on social media the two are linked), there will be a lot of such people, and most of them will inevitably do it badly.

The Dunning-Kruger Effect applies, too: There are people who are bad at art and don’t know it, so they won’t improve. (If you do know you’re currently bad at it, you’re in Brandon Sanderson’s position when he was writing bad novels in order to learn and get better.) AI is an amplifier; if you’re bad at art, you can easily make more bad art than ever before, and you probably won’t even recognize it as bad. The LLM certainly won’t tell you.

When I use the shorthand word “bad” here, I’m talking about the “poorly crafted” part of the Venn diagram above. In the case of fiction, I mean writers who don’t know the basic mechanics of writing (like grammar, usage and punctuation); don’t understand the world we live in well enough to build a fictional one; don’t know how to create a plot without forcing it along through coincidence or out-of-character choices; can’t write believable or interesting or three-dimensional characters; or don’t express themselves clearly at a sentence level. All these are faults I see abundantly in books I review that are not produced with AI, even though I dodge a lot of the worst books by avoiding unoriginal premises and books with obvious errors in their blurbs. And most of these things, apart from basic mechanics, will not be helped by LLMs in their current state. (Pasting your book into Google Docs, which is free, and following the suggestions will improve your basic mechanics well past the standard I see in many recent books I’ve reviewed, by the way. The suggestions aren’t always correct, but they’re correct often enough that, on average, following them will improve your document. The spelling and grammar checker is, of course, an LLM.) Not expressing yourself clearly, in particular, is likely to give you an outcome you weren’t looking for if you use an LLM.

Now, maybe a good artist could use AI to amplify their abilities too. Coders who are seeing the most gain are the ones with good engineering discipline, who are already used to using precise language (code) to make it clear what they want.

But see my concerns above, which hold me back from experimenting in that direction – even if the creative writing field I’m part of didn’t have rules against using generative AI for things like short stories to be published in the major magazines. I could do it for my self-published novels, because honestly if people shun me for it I’ll hardly notice the difference, but, again – concerns. And even if those concerns didn’t exist, I don’t really see how the effort of learning to use the tools would gain me anything I value. I enjoy the process of writing; that’s largely why I do it. There aren’t parts of it I want done for me. I even enjoy the sanding.

Not to mention that, in the US at least, there is no copyright protection for AI-generated works. Copyright requires a human creator, and even if you have done part of the work, copyright legally only applies to the part you did.

I have thought about converting my book The Well-Presented Manuscript, and the various craft posts I have here on my blog, into an AI tutor or coach for human writers, to help them get better quicker. It’s all my own IP, after all, and I can do what I want with it. But my other concerns about AI are too strong; it would directly compete with human editors; and besides, I don’t have the time. Building a tool like that is very time-consuming, contrary to the popular conception of how easy it is to create useful things with AI.

I don’t know if that will continue to be my position. Maybe I will try it someday; maybe my concerns will be mitigated, or there’ll be a use case that’s compelling enough for me to overcome them.

In the meantime, don’t expect me to talk a whole lot more about AI after this post, because as someone who deliberately doesn’t use it I’m in no position to talk about it from the inside. I can talk about art, and speculate on AI’s impact on art, but the technology itself is something I’m not an expert on, and can’t become an expert on without immersing myself in it. I will be using more AI at work this year, mostly in the form of machine learning rather than LLMs, and if I learn anything I think is relevant to writing, I may post about it. We’ll see.

Slop-pocalypse?

So, the ease of making AI art could lead to a slop-pocalypse (even more slop than there is already), though there are limitations on AI that may temper this problem. Most importantly, much of the real cost of operating these machines is currently being borne by venture capitalists in the hope of an eventual payoff, even though there’s no obvious business model yet. When a business model does cut in and AI is no longer cheap or free, that will drastically diminish the slop (while de-democratising the technology, so once again people who already have lots of resources will be able to do things that people who have fewer resources can’t). It will also, as our experience with social media should have taught us, make AI utterly awful, and I think it has even more potential to be awful than social media, unless someone stops it. It’s pretty awful in places already; read up a bit about Grok if you aren’t already aware. The friend I mentioned above is working on the governance side of AI, trying to be one of the people who stop it being awful, and I’m glad that’s happening, rather than just – as with social media – leaving it to people to sue after the harm has already been done. There are some very predictable harms that AI can produce and is, in some cases, already producing, and unless we collectively decide they’re not acceptable, we are going to have to live with them.

On the other hand, “slop-pocalypse” is probably too alarmist. Every new technology and art form has been condemned when it first appeared. Socrates feared that writing would harm human memory. Prose fiction, largely written by women, looked like a terrible, civilisation-ending thing to an age used to epic poetry by men. Yes, there will be negative impacts of AI. No, it isn’t going to destroy human writing, or human creativity. We’ve had electronic instruments for years that mimic the sound of a real instrument near-perfectly. People still play real instruments.

A few years ago, when indie fiction became a thing, the term “tsunami of crap” was bandied about a lot by people who feared that it would mean the end of publishing. And it did make discoverability harder, and there is a lot more bad writing available now. At least some of it sells, too, because, as I’ve noted, there is a difference between executing something well in a craft sense and making that something somehow appealing to consumers; this difference exists throughout the market, in traditional as well as indie publishing, of course. Ninety percent of everything is crap, and “everything” definitely includes traditional publishing, though the exact percentage may vary locally. What the so-called “tsunami of crap” didn’t mean was that there was no good indie writing produced, or no good writing produced at all. But it does make it harder to find, and so does the slop-pocalypse (so called).

So if discoverability is a problem, we owe it to each other to help discover good stuff – that’s partly why I review. Review books you like, especially obscure ones! Follow people who review things you like so you can find other things you like!

Of course, you’ll need to watch out for AI reviewers. I got five friend requests in a single day on my Goodreads profile recently, which is unusual, and when I looked at them, all five had profile pictures that had clearly been generated the same way, several of them were “friends” of each other, and they’d all reviewed the same three books, including one that I’d reviewed – hence, presumably, the friend request, to make them look more legit. The reviews all had that hard-to-define but easy-to-recognise smell of LLM. I reported them as review-farming bots, naturally.

But… find someone you’re confident is a person, follow their reviews, and get the books they recommend that sound like your thing. And then review them for someone else to find. We humans need to stick together.